Indicates mpi4py is properly communicating with MPI and is running processor 2 of 3 :: processor 0 of 1 :: processor 2 of 3 :: 10 cells on processor 0 of 1 WARNING : There were 4 Windows created but not freed. processor 1 of 3 :: processor 0 of 1 :: processor 1 of 3 :: 10 cells on processor 0 of 1 WARNING : There were 4 Windows created but not freed. Mpi4py PyTrilinos petsc4py FiPy processor 0 of 3 :: processor 0 of 1 :: processor 0 of 3 :: 10 cells on processor 0 of 1 WARNING : There were 4 Windows created but not freed. Solve in parallel is to run one of the examples, e.g.,: The easiest way to confirm that FiPy is properly configured to Should develop an understanding of the scaling behavior of your own codes These results are likely both problem and architecture dependent. MPI Ranks for caveats and more information. We don’t fully understand the reasons for this, but there may beĪ modest benefit, when using a large number of cpus, to allow two to Thread is faster than with two threads until the number of tasks is more Until the number of tasks reaches more than 20.

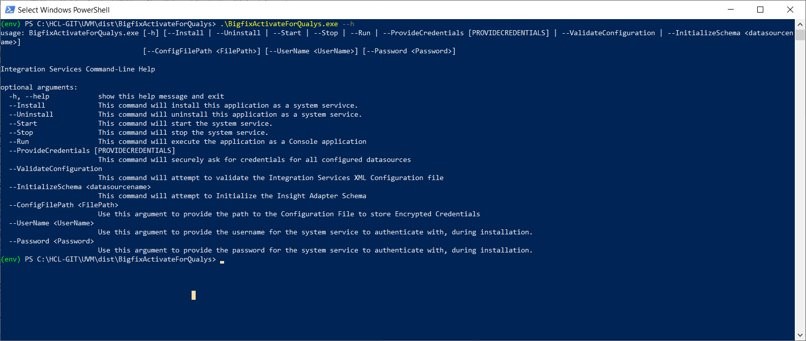

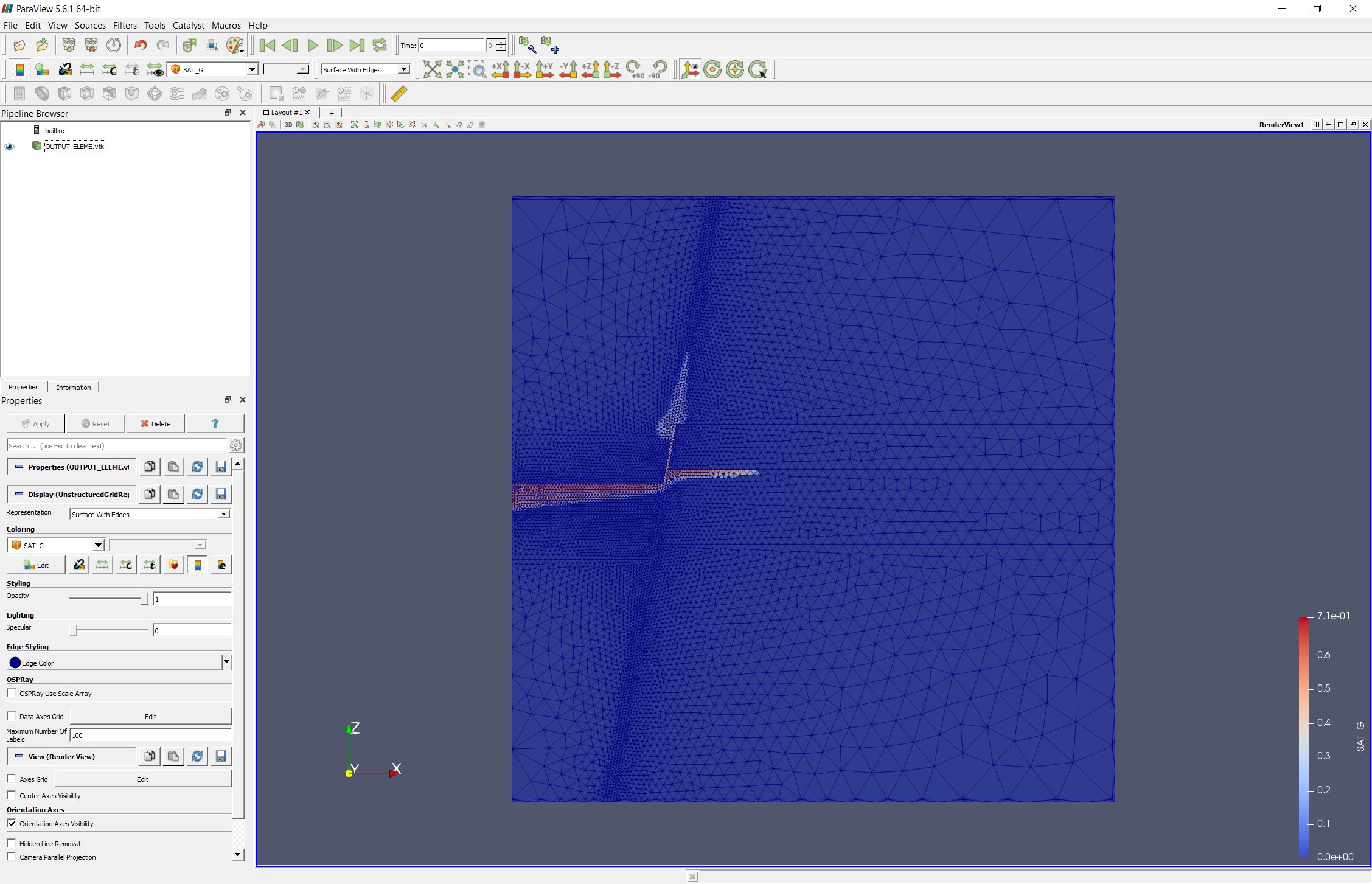

Number of tasks reaches about 10 and is faster than with four threads PETSc with one thread is faster than with two threads until the Uses and it is not always obvious which of several possibilities a Meaning of the solution tolerance depends on the normalization the solver Have different default solvers and preconditioners. Packages are not all doing the same thing. Some of this discrepancy may be because the different PETSc and Trilinos have fairly comparable performance, but Gmsh.” meshes partition more efficiently, butĬarry more overhead in other ways. Slabs, which leads to more communication overhead than more compact Grid.” meshes parallelize by dividing the mesh into At least one source of this poor scaling is MPI rank is indicated by the line style (see legend).Ī few things can be observed in this plot:īoth PETSc and Trilinos exhibit power law scaling, but the Number of tasks (processes) on a log-log plot. “Speedup” relative to Pysparse (bigger numbers are better) versus Scaling behavior of different solver packages ¶ We compare solution time vs number of Slurm tasks (availableĬores) for a Method of Manufactured Solutions Allen-Cahn problem. The following plot shows the scaling behavior for multiple Currently, the only remaining serial-only meshes are Solving in Parallel ¶įiPy can use PETSc or Trilinos to solve equations in PETSc solvers in order to see what options are possible. If present, causes lazily evaluated FiPy Variable objects to If present, causes the inclusion of all functions and variables of the The special value of dummy will allow the script If present, causes the linear solvers to print a variety of diagnosticįorces the use of the specified viewer. “ trilinos”, “ no-pysparse”, and “ pyamg”. (case-insensitive) choices are “ petsc”, “ scipy”, “ pysparse”, Usefulįorces the use of the specified suite of linear solvers. That produced a particular piece of weave C code. 
If present, causes the addition of a comment showing the Python context If present, causes many mathematical operations to be performed in C, Setting the value to “ print” causes the matrix to be Setting the value to “ terms” causes the display of the matrix for each If present, causes the graphical display of the solution matrix of eachĮquation at each call of solve() or sweep(). You can set these variables via the os.environ dictionary,īut you must do so before importing anything from the fipy You are invoking FiPy scripts from within IPython or IDLE), If you are not running in a shell ( e.g., You can set any of the following environment variables in the mannerĪppropriate for your shell. skfmm ¶įorces the use of the Scikit-fmm level set solver. lsmlib ¶įorces the use of the LSMLIB level set solver. pyamg ¶įorces the use of the PyAMG preconditioners in conjunctionįorces the use of the pyamgx solvers. no-pysparse ¶įorces the use of the Trilinos solvers without any use ofįorces the use of the SciPy solvers. trilinos ¶įorces the use of the Trilinos solvers, but uses The following flags take precedence over the FIPY_SOLVERSįorces the use of the Pysparse solvers. Requires the weaveĬauses lazily evaluated FiPy Variable objects to retain theirĬauses lazily evaluated FiPy Variable objects to always recalculate Causes many mathematical operations to be performed in C, rather than
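The note about setting variables through os.environ before importing anything from fipy can be sketched as follows. This is a minimal illustration, not the documented procedure: it assumes that FIPY_SOLVERS is the variable that selects the solver suite and that “scipy” is an accepted value, as the text suggests. The helper name import_fipy is hypothetical, and the import is guarded so the sketch still runs where FiPy is not installed.

```python
import os

# Assumption: FIPY_SOLVERS selects the solver suite and "scipy" is one of
# its accepted (case-insensitive) values. Set it BEFORE importing fipy.
os.environ["FIPY_SOLVERS"] = "scipy"


def import_fipy():
    """Hypothetical helper: import fipy only after the environment is set.

    Returns the module, or None if FiPy (or the requested solver suite)
    cannot be imported in this environment.
    """
    try:
        import fipy
    except ImportError:
        return None
    return fipy


fipy = import_fipy()
print("FIPY_SOLVERS =", os.environ["FIPY_SOLVERS"])
print("fipy available:", fipy is not None)
```

The ordering is the point of the sketch: the assignment to os.environ comes first, and the import happens only afterwards, inside the helper.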

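The scaling discussion describes power law behavior on a log-log plot and recommends studying the scaling of your own codes. One possible way to put a number on that is to fit a straight line to log(speedup) versus log(tasks); the slope is the power law exponent. The sketch below is an assumption-laden illustration, not part of FiPy: fit_power_law is a hypothetical helper, and the speedups are synthetic values generated from an exact power law with an arbitrary exponent, not measurements.

```python
import math


def fit_power_law(tasks, speedups):
    """Least-squares fit of speedup = prefactor * tasks**exponent.

    The fit is done on log-transformed data, so it corresponds to a
    straight line on a log-log plot.
    """
    xs = [math.log(n) for n in tasks]
    ys = [math.log(s) for s in speedups]
    count = len(xs)
    mean_x = sum(xs) / count
    mean_y = sum(ys) / count
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    exponent = sxy / sxx
    prefactor = math.exp(mean_y - exponent * mean_x)
    return exponent, prefactor


# Synthetic data (NOT measured): generated from an exact power law so the
# fit has a known answer. Replace with timings from your own runs.
tasks = [1, 2, 4, 8, 16, 32]
true_exponent = 0.5  # arbitrary value chosen for illustration
speedups = [n ** true_exponent for n in tasks]

exponent, prefactor = fit_power_law(tasks, speedups)
print(f"fitted exponent = {exponent:.3f}, prefactor = {prefactor:.3f}")
```

With real timings, the speedup for each task count would be a reference time divided by the measured time, and an exponent well below 1 would indicate less than ideal scaling.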